Requirements & Planning

- Business Sustainability Plan (BSP) evaluation criteria

- System architecture

- Tech stack selection

- Database design

Bandurra's implementation of Bandurra Agent demonstrates that organizations can adopt LLM-based document question answering without sacrificing data privacy or accepting unpredictable costs.

Organizations handling sensitive documents face significant challenges when adopting AI technology. Bandurra identified several critical concerns that needed to be addressed:

- Data privacy: Sending confidential business documents to third-party AI services such as OpenAI or Anthropic poses unacceptable security and privacy risks.

- Unpredictable costs: Cloud-based LLM APIs bill by usage, typically per token (roughly $0.002-$0.01 per request), so costs are unpredictable and potentially prohibitive at scale.

- Vendor lock-in: Dependence on specific AI providers creates risk from service changes, API deprecations, or pricing increases.

- Connectivity: Cloud-based solutions fail when internet connectivity is unavailable, which limits deployment scenarios.

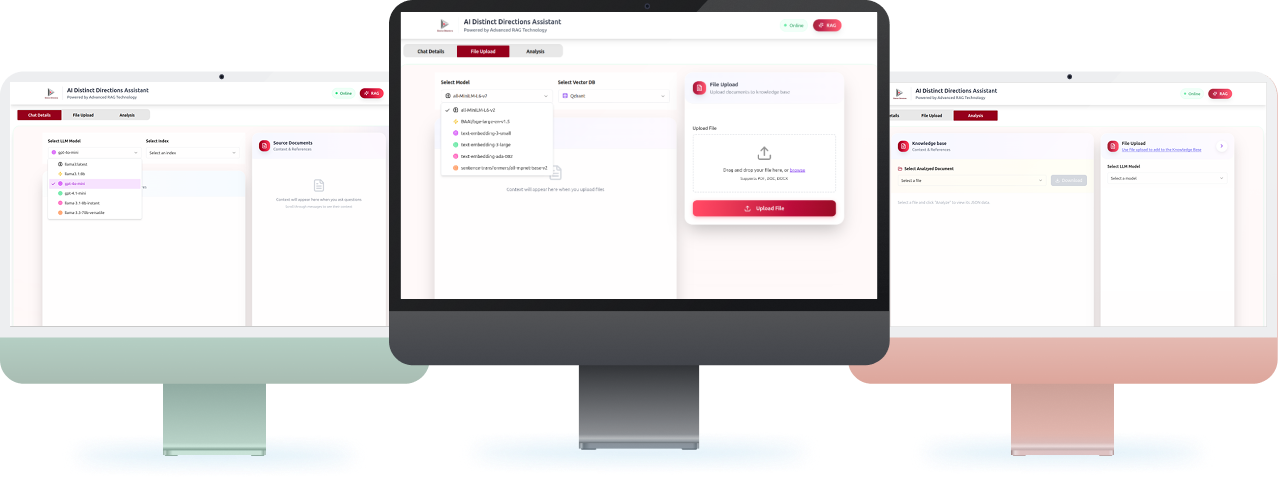

EncureIT Systems partnered with Bandurra to design and implement Bandurra Agent, a comprehensive, offline-first Retrieval-Augmented Generation (RAG) platform that processes documents, generates embeddings, and answers questions entirely on local infrastructure.
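The retrieval half of a RAG pipeline can be sketched as: split documents into overlapping chunks, embed each chunk, and rank chunks by cosine similarity to the embedded question. This is a minimal, self-contained sketch, not Bandurra Agent's implementation: `embed` is a hypothetical hashed bag-of-words stand-in for a locally hosted embedding model, the in-memory list stands in for a vector database, and the names `chunk`, `embed`, `build_index`, and `retrieve` are illustrative.

```python
import math
import re
import zlib
from collections import Counter

DIM = 256  # hypothetical vector size for the toy embedding


def chunk(text: str, size: int = 50, overlap: int = 10) -> list[str]:
    """Split text into windows of `size` words that overlap by `overlap` words."""
    words = text.split()
    step = size - overlap
    return [" ".join(words[i:i + size]) for i in range(0, max(len(words) - overlap, 1), step)]


def embed(text: str) -> list[float]:
    """Toy stand-in for a local embedding model: L2-normalized hashed word counts."""
    vec = [0.0] * DIM
    for token, count in Counter(re.findall(r"[a-z0-9]+", text.lower())).items():
        vec[zlib.crc32(token.encode()) % DIM] += count
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


def build_index(docs: dict[str, str]) -> list[tuple[str, str, list[float]]]:
    """Return (doc_id, chunk_text, vector) triples; a vector database would store these."""
    return [(doc_id, c, embed(c)) for doc_id, text in docs.items() for c in chunk(text)]


def retrieve(index, query: str, k: int = 3) -> list[tuple[str, str, float]]:
    """Rank chunks by cosine similarity (a dot product, since vectors are normalized)."""
    q = embed(query)
    scored = [(doc_id, c, sum(a * b for a, b in zip(q, v))) for doc_id, c, v in index]
    return sorted(scored, key=lambda hit: hit[2], reverse=True)[:k]


# Hypothetical sample documents.
docs = {
    "leave-policy.txt": "Employees receive 25 vacation days per year under the leave policy.",
    "gpu-notes.txt": "The inference server uses a GPU to accelerate the language model.",
}
index = build_index(docs)
print(retrieve(index, "How many vacation days do employees receive?", k=1))
```

Swapping `embed` for a real local embedding model and the list for a vector database changes the storage and vector quality, but not the shape of the chunk, embed, and rank loop.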

What the Platform Delivered:

Bandurra now processes sensitive business documents with zero external data transmission. All document content, embeddings, and LLM interactions remain within the organization's infrastructure, which supports GDPR compliance, HIPAA requirements for healthcare documents, and SOC 2 audit criteria. The system also supports air-gapped deployments for maximum security.
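Zero external data transmission holds only if every model and vector-store endpoint the system talks to is local. A minimal sketch of a startup check that could enforce this, assuming endpoints are configured as URLs; `is_local_endpoint` is a hypothetical helper, not part of Bandurra Agent, and port 11434 is only an example value.

```python
import ipaddress
import socket
from urllib.parse import urlparse


def is_local_endpoint(url: str) -> bool:
    """Return True only if the URL's host resolves exclusively to loopback or private addresses."""
    host = urlparse(url).hostname
    if not host:
        return False
    try:
        infos = socket.getaddrinfo(host, None)
    except socket.gaierror:
        return False  # unresolvable hosts are treated as non-local
    if not infos:
        return False
    for *_, sockaddr in infos:
        addr = ipaddress.ip_address(sockaddr[0].split("%")[0])  # drop any IPv6 zone ID
        if not (addr.is_loopback or addr.is_private):
            return False
    return True


# Hypothetical configured endpoints: a local model server and a public address.
print(is_local_endpoint("http://127.0.0.1:11434"))  # True
print(is_local_endpoint("https://8.8.8.8/v1"))      # False
```

Failing closed on unresolvable hosts means a misconfigured endpoint blocks startup rather than silently sending data elsewhere.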

By eliminating cloud API dependencies, Bandurra reduced AI operational costs by 70% compared to cloud alternatives. Processing 10,000 queries per month incurs no per-query API fees (vs. $300-$1,200 with OpenAI), and generating embeddings for 1 million tokens incurs no per-token fees (vs. $20-$100 with cloud services). Because the remaining infrastructure costs are fixed, annual spending is predictable and free of the budget uncertainty that comes with variable API usage.
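Whether fixed-cost local infrastructure beats usage-priced APIs comes down to a break-even volume: amortized monthly infrastructure cost divided by the per-query API price. A minimal sketch; the function names and the hardware price, lifetime, and operating-cost inputs are hypothetical placeholders, not Bandurra's figures.

```python
def monthly_fixed_cost(hardware_price: float, lifetime_months: int, monthly_opex: float) -> float:
    """Amortize a one-time hardware purchase and add recurring costs (power, maintenance)."""
    return hardware_price / lifetime_months + monthly_opex


def monthly_api_cost(queries_per_month: int, cost_per_query: float) -> float:
    """Usage-priced cloud spend grows linearly with query volume."""
    return queries_per_month * cost_per_query


def break_even_queries(fixed_monthly: float, cost_per_query: float) -> float:
    """Monthly query volume at which local infrastructure and API fees cost the same."""
    if cost_per_query <= 0:
        raise ValueError("cost_per_query must be positive")
    return fixed_monthly / cost_per_query


# Hypothetical inputs: a $12,000 server amortized over 36 months plus $100/month opex.
fixed = monthly_fixed_cost(12_000, 36, 100)
print(round(fixed, 2))                         # 433.33
print(round(break_even_queries(fixed, 0.01)))  # 43333
```

Above the break-even volume each additional query widens the gap in favor of local infrastructure; below it, usage-based pricing can still be cheaper, so the comparison depends on actual query volume.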

The offline architecture delivers sub-100ms semantic search and 5-15 second end-to-end query responses. Because no external API is involved, the system is not subject to rate limits or third-party outages and keeps working without internet connectivity. GPU acceleration makes inference about 10x faster than CPU-only processing, bringing response times close to those of cloud services.

The platform handles 10,000+ documents with instant retrieval and supports concurrent usage by 50+ users on the recommended hardware configuration. Its modular architecture makes model upgrades straightforward: switching from Llama 3.1 8B to a more powerful model or to a lighter alternative such as Phi Mini or Gemma 2B requires only a configuration change. Multiple embedding models and vector databases are supported for deployment flexibility.
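When the model is selected by name at request time, switching models reduces to editing one configuration value. A minimal sketch, assuming an Ollama-style local model server (the source does not name the serving stack); `ModelConfig`, `load_config`, and `generate_request` are hypothetical names, and the code only builds the URL and JSON body for Ollama's `/api/generate` endpoint rather than sending the request.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelConfig:
    """Hypothetical configuration schema; defaults assume an Ollama-style local server."""
    llm_model: str = "llama3.1:8b"
    base_url: str = "http://127.0.0.1:11434"


def load_config(raw: str) -> ModelConfig:
    """Parse JSON config; unknown keys raise TypeError, missing keys fall back to defaults."""
    return ModelConfig(**json.loads(raw))


def generate_request(cfg: ModelConfig, prompt: str) -> tuple[str, dict]:
    """Build (URL, JSON body) for Ollama's /api/generate endpoint without sending it."""
    body = {"model": cfg.llm_model, "prompt": prompt, "stream": False}
    return f"{cfg.base_url}/api/generate", body


# Swapping models is a one-line config change; the calling code stays the same.
default_cfg = load_config("{}")
light_cfg = load_config('{"llm_model": "gemma:2b"}')
print(generate_request(light_cfg, "Summarize the leave policy.")[1]["model"])  # gemma:2b
```

Rejecting unknown keys catches typos in the config file early instead of silently falling back to the default model.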

Bandurra gained instant access to organizational knowledge through natural-language queries. Employees can ask questions about policies, contracts, technical documentation, and research papers and receive accurate, context-aware answers with source citations. The system also supports specialized use cases, including Business Sustainability Plan (BSP) evaluation, automated document analysis, and multi-document reasoning.
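Source citations can be produced by numbering the retrieved passages in the prompt and mapping the model's [n] markers back to documents. A minimal sketch; the prompt wording, `Passage`, `build_cited_prompt`, and `cited_sources` are hypothetical, and the sample answer string stands in for real model output.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    source: str  # hypothetical: the document name shown to the user as a citation
    text: str


def build_cited_prompt(question: str, passages: list[Passage]) -> str:
    """Number retrieved passages so the model can cite them as [1], [2], ..."""
    numbered = "\n".join(f"[{i}] ({p.source}) {p.text}" for i, p in enumerate(passages, start=1))
    return (
        "Answer using only the numbered sources below and cite them as [n].\n\n"
        f"{numbered}\n\nQuestion: {question}\nAnswer:"
    )


def cited_sources(answer: str, passages: list[Passage]) -> list[str]:
    """Map [n] markers in the answer back to source names, ignoring out-of-range numbers."""
    sources: list[str] = []
    for n in map(int, re.findall(r"\[(\d+)\]", answer)):
        if 1 <= n <= len(passages) and passages[n - 1].source not in sources:
            sources.append(passages[n - 1].source)
    return sources


passages = [
    Passage("leave-policy.pdf", "Employees receive 25 vacation days per year."),
    Passage("contract-2023.pdf", "The service contract renews annually."),
]
print(build_cited_prompt("How many vacation days do employees get?", passages))
answer = "Employees get 25 days [1]; the contract renews yearly [2][1]. [7]"
print(cited_sources(answer, passages))  # ['leave-policy.pdf', 'contract-2023.pdf']
```

Dropping out-of-range markers such as [7] keeps a model's invented citation numbers from surfacing as links to documents that were never retrieved.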
